“Reverse Citations”: What SEOs/GEOs Need to Know About Ungrounded Citations

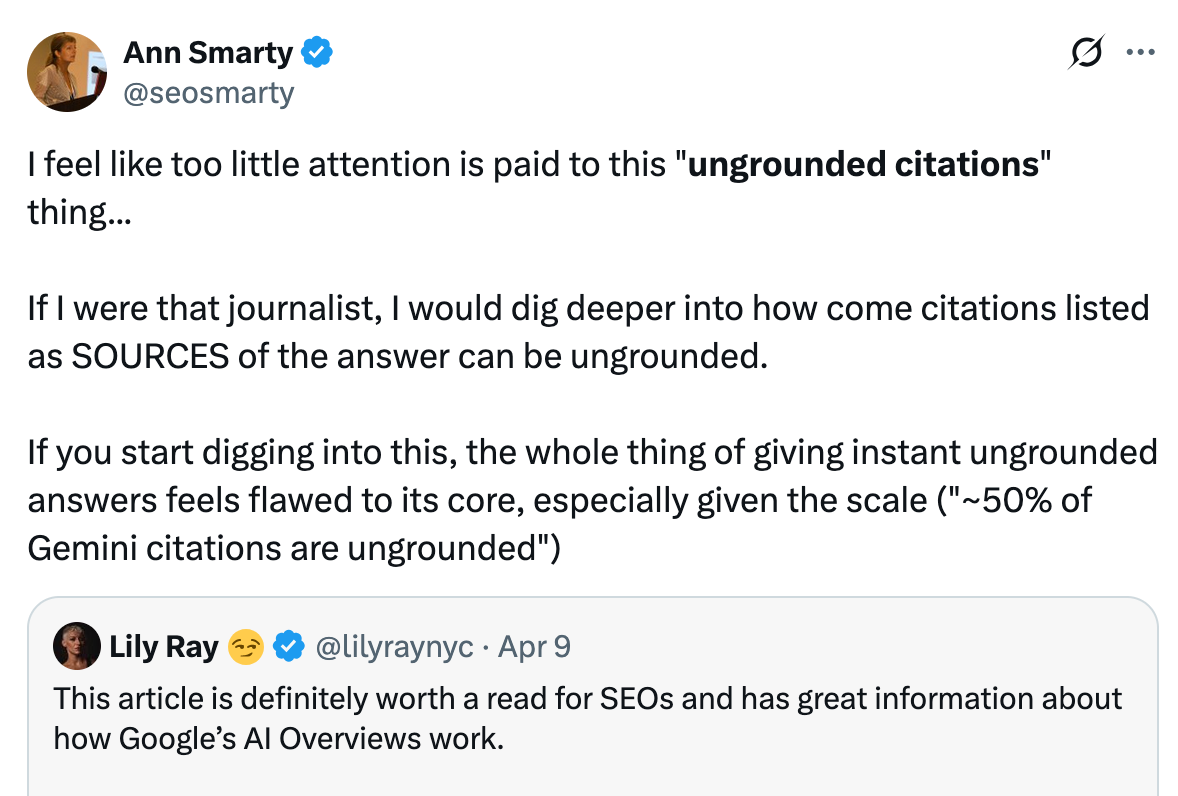

Everyone is optimizing for AI citations these days. But do we actually know how those work?

Last week, the NY Times published an article on how accurate (or rather inaccurate) AI Overviews are.

One topic the article did not give enough attention to is the so-called “ungrounded” citations that make evaluating AI Overview accuracy challenging.

“Ungrounded citations” are citations that, basically, have nothing to do with the AI answer itself. This is when an AI answer links “to websites that [do] not completely support the information they provide”.

I found it surprising that the whole concept of citing sources that are not, in essence, actual sources did not seem to surprise reporters, who are taught to back their claims with trusted sources.


Let’s take a deeper dive into this AI phenomenon, because it is not discussed enough even though it offers a huge insight into LLM visibility (GEO) as a whole:

What are “ungrounded” citations, and why do I call them “reverse” citations?

An ungrounded citation is a link that an LLM finds after the answer is written.

In other words, the process is reversed here. Instead of using additional information from the URLs it finds, an AI bot will first write an answer based on what it already knows and then find URLs that seem to support that answer.

Reverse citations are nothing new.

Back when ChatGPT had just launched, it gave no citations whatsoever. So we demanded them. And it started providing URLs that had nothing to do with the answer, because the process was reversed:

It writes an answer from the training data.

It gives URLs on the topic (seemingly without “reading” those pages).
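To make the order of operations concrete, here is a minimal toy sketch of the two flows. It is not how any real LLM is implemented: `WEB_INDEX`, `TRAINING_MEMORY`, `search`, `grounded_answer`, and `reverse_cited_answer` are all hypothetical names, the data is made up, and the "search" is a naive word-overlap match. The only point is where the citations enter the process.

```python
from dataclasses import dataclass

# Hypothetical toy data: a tiny "web" and a tiny "training memory".
WEB_INDEX = {
    "https://example.com/a": "Brand A is a project management tool for agencies.",
    "https://example.com/b": "A roundup of project management tools.",
}
TRAINING_MEMORY = {
    "best project management tool": "Brand A is a popular choice for agencies.",
}


@dataclass
class Answer:
    text: str
    citations: list[str]
    grounded: bool


def search(query: str) -> list[str]:
    """Naive search: return URLs whose page shares at least one word with the query."""
    words = set(query.lower().split())
    return [
        url
        for url, page in WEB_INDEX.items()
        if words & set(page.lower().split())
    ]


def grounded_answer(query: str) -> Answer:
    """Retrieval first: the answer text is built from the pages that get cited."""
    urls = search(query)
    text = " ".join(WEB_INDEX[url] for url in urls)
    return Answer(text=text, citations=urls, grounded=True)


def reverse_cited_answer(query: str) -> Answer:
    """Answer first: the text comes from memory, URLs are looked up afterwards."""
    text = TRAINING_MEMORY[query]   # step 1: write the answer from training data
    urls = search(text)             # step 2: find URLs that look related
    return Answer(text=text, citations=urls, grounded=False)


if __name__ == "__main__":
    query = "best project management tool"
    print(grounded_answer(query))
    print(reverse_cited_answer(query))
```

In the reverse flow, the generic roundup page still gets cited because it loosely overlaps with the answer, even though the answer text never came from it. Changing that page would change the citation list, not the answer.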

Since then, LLMs have started searching much more. And they seem to synthesize answers from those URLs in most cases, but the question has always been there:

What comes first? An answer or the citations?

What influences what?

The NY Times article seems to shed some light.

Roughly 50% of Gemini answers are ungrounded

That is, Gemini pulls those answers from its training data and THEN finds what to cite.

We know (from leaked Claude docs) when LLMs have to search for answers: when the answer requires more recent knowledge than the training data can realistically contain.

In about half of use cases, LLMs are likely to tell you what they already know.

And that is happening for at least two reasons (in my opinion):

Training data gives less biased, more accurate answers, because citations can be manipulated (we are well aware of that).

It is less resource-intensive than synthesizing answers from new searches.

Aaron Haynes has an excellent prediction here:

…the part that’s going to get worse: Gemini 3 got more accurate AND more ungrounded at the same time. Those aren’t contradictory - they’re happening at different layers. accuracy is improving in training (the model just knows more). Grounding is degrading in retrieval (the model needs sources less, so it cares about citation fidelity less).

And yet, training data doesn’t store URLs that LLMs once learned answers from. So, to provide you with sources, they need to search for citations that seem to support their answers. And at that point, citations don’t influence answers, nor your brand’s visibility in them.

Why do SEOs need to know this?

Quite clearly, this directly influences the optimization strategy.

If you are only optimizing for “retrieval” (which is what most SEO / GEO experts seem to talk about), you are very, very far behind.

Product positioning and awareness inside training data are fundamental and crucial for AI visibility. It is also a much more difficult process than simply optimizing for retrieval, and it takes a lot of marketing channels to align with your value proposition.

Digital PR, Reddit marketing, on- and off-site content alignment and visibility - everything should be adding clear, consistent context around your brand, for months and years, loudly enough for LLMs to notice.

If you need help with that strategy, reach out!